Edge Computing: Why 50ms of Latency Can Kill

Alex Rivera

February 13, 2026

Every time you ask a voice assistant a question, stream a video, or check a traffic app, your request travels to a data center that might be hundreds or thousands of miles away. The data center processes the request and sends the answer back. This round trip happens so fast — usually in tens of milliseconds — that you barely notice it. For most applications, this cloud computing model works perfectly well.

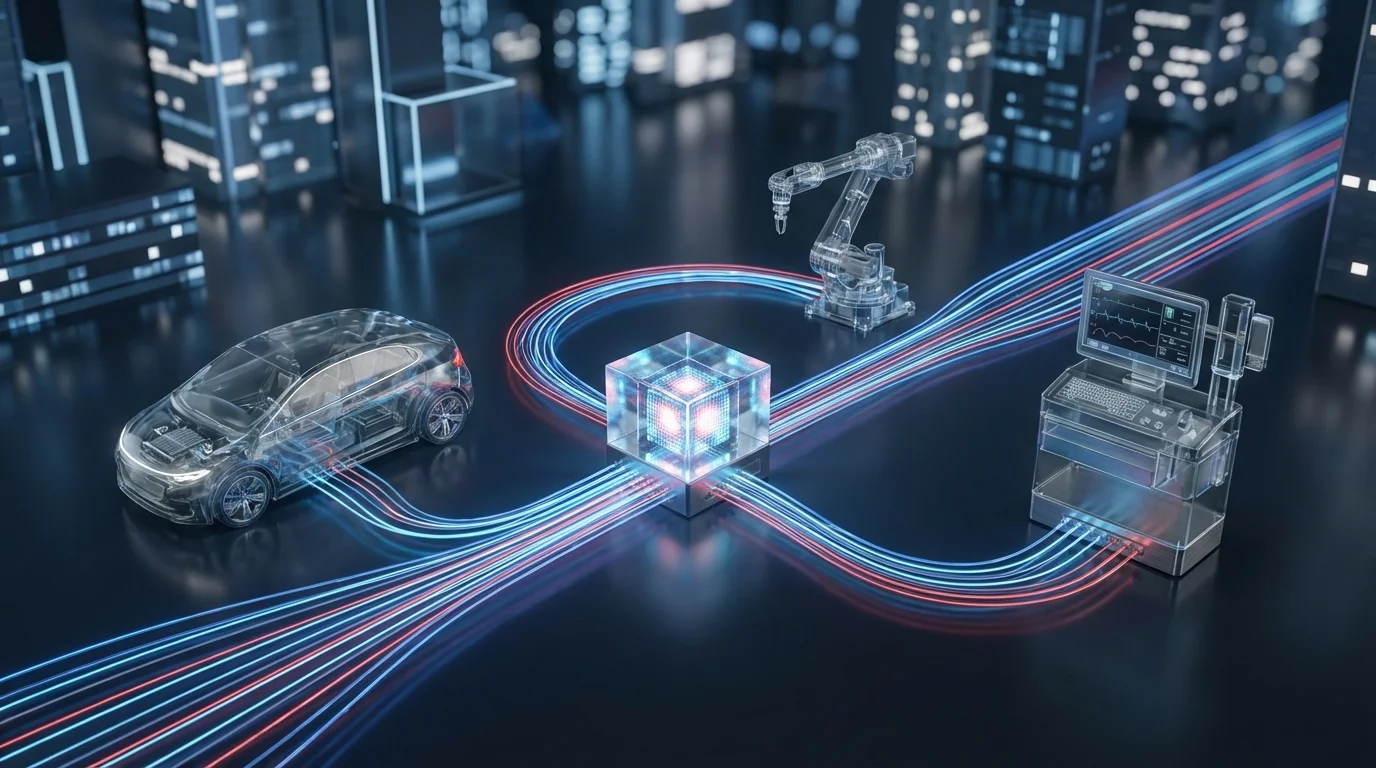

But for a growing class of applications, those milliseconds matter enormously. An autonomous car cannot afford to wait 50 milliseconds for a cloud server to decide whether the shape ahead is a pedestrian. A surgeon using robotic tools needs zero perceptible delay between their hand movements and the robot's response. A factory robot operating at high speed needs to detect anomalies and react in real-time, not after a round trip to a distant server.

Edge computing solves this problem by moving computation closer to where data is generated and where actions need to happen. Instead of sending everything to a centralized cloud, edge computing processes data at or near its source — in the factory, at the cell tower, in the vehicle, or on the device itself.

This article explains what edge computing is, why it matters, how it relates to cloud computing, where it is being deployed today, and what it means for the future of technology infrastructure.

What Edge Computing Actually Is

The Simple Definition

Edge computing is the practice of processing data near the source of the data rather than in a centralized data center. The "edge" refers to the edge of the network — the physical locations where devices connect to the internet and where the digital world meets the physical world.

Think of it this way. Cloud computing is like a library: all the books (data and processing power) are in one central location, and you have to travel there to use them. Edge computing is like having a bookshelf in every room of your house: the most-needed resources are immediately available where you need them, and you only go to the library for things your home bookshelf does not have.

The Edge Computing Spectrum

Edge computing is not a single technology but a spectrum of approaches:

On-device processing: The most extreme edge. Your smartphone's processor handles face recognition locally without sending your photo to a server. Your smartwatch processes health data on your wrist. This is the closest computation can get to the data source.

Edge servers: Small computing installations at or near the data source. A factory might have an edge server on the production floor that processes sensor data in real-time. A hospital might have edge servers that process imaging data locally before sending summaries to the cloud.

Micro data centers: Small, self-contained computing facilities deployed at the edge of the network. Telecommunications companies are deploying these at cell tower sites to provide low-latency processing for 5G applications.

Regional edge: Medium-scale computing facilities located in metropolitan areas, closer to users than traditional cloud data centers but more capable than micro data centers. Providers such as AWS, Microsoft Azure, and Cloudflare operate regional edge facilities in hundreds of locations globally.

Each level of the spectrum trades off between proximity (lower latency) and capability (more processing power). The art of edge architecture is placing computation at the right level for each application's needs.
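The trade-off between proximity and capability can be sketched as a small placement function. This is only an illustration: the tier names follow the list above, but the latency and capability numbers are assumptions chosen for the example, and `place_workload` is a hypothetical helper, not part of any real orchestration tool.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Tier:
    name: str
    typical_rtt_ms: float  # assumed round-trip latency to reach this tier
    relative_compute: int  # assumed capability score; higher is more capable


# Illustrative numbers only; real values depend on the deployment.
TIERS = [
    Tier("on-device", 0.1, 1),
    Tier("edge-server", 2.0, 10),
    Tier("micro-data-center", 8.0, 50),
    Tier("regional-edge", 25.0, 500),
    Tier("cloud", 100.0, 10_000),
]


def place_workload(latency_budget_ms: float, min_compute: int) -> Optional[Tier]:
    """Return the most capable tier that fits the latency budget, or None."""
    candidates = [
        t for t in TIERS
        if t.typical_rtt_ms <= latency_budget_ms and t.relative_compute >= min_compute
    ]
    return max(candidates, key=lambda t: t.relative_compute, default=None)


print(place_workload(latency_budget_ms=10, min_compute=5))   # micro data center
print(place_workload(latency_budget_ms=1, min_compute=10))   # None: nothing fits
```

The second call returns `None` because no tier is both close enough and powerful enough. Under these assumed numbers, that workload would need either a looser latency budget or better hardware on the device.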

Why Edge Computing Matters

The Latency Problem

Latency, the time it takes for data to travel from source to processor and back, is the primary driver of edge computing. Light in fiber optic cable travels at roughly 200 kilometers per millisecond, about two-thirds of its speed in a vacuum, and that imposes a hard physical floor on how quickly data can cover a given distance. No amount of engineering can break this limit.

For a user in Tokyo accessing a cloud data center in Virginia, roughly 11,000 kilometers away, the minimum round-trip time due to physics alone is approximately 110 milliseconds. Add in routing, processing, and network congestion, and real-world latency is typically 150 milliseconds or more.

For web browsing and email, a 200-millisecond delay is tolerable. For real-time control of physical systems such as vehicles, robots, surgical instruments, and industrial equipment, it is unacceptable. Many edge computing applications require latency under 10 milliseconds, and some require sub-millisecond response times.

Edge computing achieves this by processing data where it is generated, reducing the distance data must travel to near zero.
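The effect of distance on the latency floor is simple arithmetic. The sketch below uses the 200 kilometers per millisecond figure for fiber; the three distances are assumed example values, not measurements, and the result ignores routing, queuing, and processing time.

```python
FIBER_KM_PER_MS = 200.0  # approximate speed of light in optical fiber


def min_round_trip_ms(one_way_km: float) -> float:
    """Lower bound on round-trip time from propagation delay alone."""
    return 2 * one_way_km / FIBER_KM_PER_MS


# Assumed example distances from a device to where its data is processed.
examples = [
    ("on-premises edge server", 1),
    ("metro edge site", 50),
    ("distant cloud region", 4000),
]
for label, km in examples:
    print(f"{label:>24}: {min_round_trip_ms(km):.2f} ms")
```

At 4,000 kilometers the propagation floor alone is 40 milliseconds, which already exceeds a 10-millisecond budget before any processing happens. At 50 kilometers it is half a millisecond.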

The Bandwidth Problem

The volume of data generated by connected devices is growing rapidly. By some industry estimates, a single autonomous vehicle can generate roughly 20 terabytes of data per day from its cameras, lidar, radar, and other sensors. A modern factory with thousands of IoT sensors can generate petabytes per year. Sending all of this data to the cloud for processing would be prohibitively expensive and would overwhelm network capacity.

Edge computing addresses this by processing data locally, extracting relevant insights, and sending only the results to the cloud. Instead of streaming 20 terabytes of raw sensor data from a vehicle, the edge processor sends summaries: "pothole detected at this GPS coordinate," "brake pads worn to 30% capacity," "traffic pattern suggests alternate route." This reduces bandwidth requirements by orders of magnitude.
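To get a feel for the numbers, the sketch below converts a daily data volume into the sustained uplink rate needed to ship it, then shows the kind of local filtering that replaces the raw stream with a few events. The `summarize` helper, the road-roughness readings, and the threshold are hypothetical; the 20 terabytes figure is the one quoted above, read as decimal terabytes.

```python
def sustained_mbps(bytes_per_day: float) -> float:
    """Average uplink rate needed to ship a daily data volume, in megabits/s."""
    seconds_per_day = 86_400
    return bytes_per_day * 8 / seconds_per_day / 1e6


RAW_BYTES_PER_DAY = 20e12  # 20 TB/day, as quoted in the text

# About 1852 Mbit/s, i.e. nearly 2 Gbit/s around the clock for one vehicle.
print(f"{sustained_mbps(RAW_BYTES_PER_DAY):.0f} Mbit/s")


def summarize(readings, threshold):
    """Keep only readings at or above a threshold, as compact event records."""
    return [{"t": t, "value": v} for t, v in readings if v >= threshold]


# Hypothetical (timestamp, road-roughness) samples processed on the vehicle.
readings = [(0, 0.10), (1, 0.20), (2, 0.90), (3, 0.15)]
print(summarize(readings, threshold=0.8))  # only the one notable event is sent
```

Real pipelines use far richer models than a threshold, but the shape is the same: the raw samples stay local, and only the events worth acting on cross the network.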

The Reliability Problem

Cloud computing requires a network connection. If the connection fails, cloud-dependent applications stop working. For consumer apps, this is an inconvenience. For industrial systems, medical devices, or autonomous vehicles, it can be dangerous.

Edge computing provides resilience by ensuring that critical processing can continue even when cloud connectivity is interrupted. A factory's safety systems continue monitoring and responding. An autonomous vehicle continues operating. A hospital's real-time monitoring continues alerting staff to critical changes.
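One common way to get this behavior is a store-and-forward pattern: the decision is made locally every time, and telemetry is queued until the uplink returns. The following is a minimal sketch under assumptions. `EdgeNode`, the `send` callable, and the single numeric limit are all hypothetical stand-ins for a real control loop and a real cloud client.

```python
from collections import deque


class EdgeNode:
    """Makes decisions locally and buffers telemetry while the uplink is down."""

    def __init__(self, send, limit, max_buffer=1000):
        self.send = send    # hypothetical uplink; raises ConnectionError when offline
        self.limit = limit  # assumed safety threshold for a sensor reading
        self.buffer = deque(maxlen=max_buffer)  # oldest records are dropped when full

    def handle(self, reading):
        # The safety decision never waits on the network.
        action = "shutdown" if reading > self.limit else "ok"
        self.buffer.append({"reading": reading, "action": action})
        self.flush()
        return action

    def flush(self):
        """Send buffered records in order; stop quietly if still offline."""
        while self.buffer:
            try:
                self.send(self.buffer[0])
            except ConnectionError:
                return  # keep the records for the next attempt
            self.buffer.popleft()
```

The bounded buffer is a deliberate choice in this sketch: during a long outage it loses the oldest telemetry rather than exhausting memory on a small device. A production system might spill to disk instead.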

The Privacy and Sovereignty Problem

Sending data to the cloud means sending it to a specific jurisdiction, often in a different country. Privacy regulations like GDPR in Europe, LGPD in Brazil, and various data localization laws increasingly restrict where data can be processed.

Edge computing allows data to be processed where it is generated, within the same jurisdiction and often on premises controlled by the data owner. Sensitive medical data never needs to leave the hospital. Manufacturing intellectual property stays on the factory floor. Personal data can be processed on the user's device without ever being transmitted.

Edge Computing and 5G: A Symbiotic Relationship

Why 5G Needs Edge Computing

5G networks promise dramatically higher speeds, lower latency, and the ability to connect millions of devices per square kilometer. But 5G's ultra-low-latency promise — sub-10-millisecond round trips — is impossible to deliver if the data still has to travel to a distant cloud data center.

This is where edge computing becomes essential. By deploying computation at the base of 5G cell towers, telecommunications companies can deliver on 5G's latency promises. The data travels a short distance to a nearby edge server rather than across the country to a cloud data center.

Why Edge Computing Needs 5G

Conversely, 5G enables edge computing scenarios that were previously impractical. The high bandwidth and low latency of 5G make it possible to stream data from mobile devices to nearby edge servers at speeds that enable real-time processing.

Consider a fleet of delivery drones. Each drone has limited onboard computing power due to weight and power constraints. With 5G and edge computing, the drones can offload complex computations — route optimization, collision avoidance, package identification — to nearby edge servers while maintaining the real-time responsiveness needed for safe flight.
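The offloading decision in this scenario comes down to comparing two paths against a deadline. The sketch below is a simplified model, and every timing value is an assumed input supplied by the caller. A real system would measure these continuously and account for jitter and energy use.

```python
def choose_executor(local_ms, edge_compute_ms, network_rtt_ms, deadline_ms):
    """Pick where to run a task: 'local', 'edge', or 'neither' if no path meets the deadline."""
    options = {
        "local": local_ms,
        "edge": network_rtt_ms + edge_compute_ms,  # offloading pays the round trip
    }
    feasible = {name: ms for name, ms in options.items() if ms <= deadline_ms}
    if not feasible:
        return "neither"
    return min(feasible, key=feasible.get)


# Heavy task, fast link: the edge server wins (15 ms versus 80 ms on board).
print(choose_executor(local_ms=80, edge_compute_ms=5, network_rtt_ms=10, deadline_ms=30))
# Light task: running on board avoids the network round trip entirely.
print(choose_executor(local_ms=8, edge_compute_ms=5, network_rtt_ms=10, deadline_ms=30))
```

When the function returns `"neither"`, the drone needs a fallback that fits the deadline on board, such as a simpler model or a conservative default maneuver.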

Deployment Status in 2026

By 2026, many of the largest telecommunications companies have deployed Multi-access Edge Computing (MEC) at sites across their networks. Verizon, AT&T, T-Mobile, Deutsche Telekom, and their counterparts in Asia have partnered with cloud providers to offer edge computing as a service alongside 5G connectivity.

The applications running on these edge networks include industrial IoT platforms, connected vehicle infrastructure, real-time video analytics, and mobile gaming with cloud rendering. The market is growing rapidly, though it is still in relatively early stages compared to the mature cloud computing market.

Real-World Edge Computing Use Cases

Autonomous Vehicles

Autonomous vehicles are perhaps the most demanding edge computing application. A self-driving car must process data from dozens of sensors — cameras, lidar, radar, ultrasonic — and make driving decisions in milliseconds. Waiting for a cloud server to process this data is simply not an option.

Current autonomous vehicles perform the vast majority of their computation on board, using specialized processors from companies like Nvidia, Mobileye, and Qualcomm. These onboard edge systems process terabytes of sensor data per day, running complex AI models that detect objects, predict movements, and plan paths in real-time.

But autonomous vehicles also benefit from infrastructure-level edge computing. Edge servers at intersections can aggregate data from multiple vehicles and traffic sensors, detect hazards that no single vehicle can see, and communicate warnings to approaching vehicles. This vehicle-to-infrastructure (V2I) communication, processed at the edge, adds a layer of safety beyond what any individual vehicle can achieve alone.

Smart Manufacturing

Manufacturing has been one of the earliest and most successful adopters of edge computing. Factories operate in environments where milliseconds matter, network reliability is critical, and proprietary data must stay on premises.

Edge computing in manufacturing takes several forms:

Predictive maintenance: Sensors on equipment continuously monitor vibration, temperature, pressure, and other indicators. Edge processors analyze this data in real-time, detecting patterns that predict equipment failure before it happens. This allows maintenance to be scheduled during planned downtime rather than after a costly breakdown.

Quality inspection: Computer vision systems on production lines inspect every product in real-time, detecting defects that human inspectors would miss. These systems must operate at production speed — sometimes hundreds of units per minute — which requires edge processing with minimal latency.

Process optimization: Edge analytics continuously adjust manufacturing parameters — temperature, pressure, speed, chemical composition — based on real-time sensor data, optimizing quality and efficiency without human intervention.
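As a rough illustration of the predictive maintenance case, an edge processor can flag sensor readings that deviate sharply from recent history. The sketch below uses a rolling z-score, which is one simple technique among many; the class name, window size, and threshold are assumptions, and production systems typically use models trained on the failure history of the specific equipment.

```python
from collections import deque
from statistics import mean, stdev


class VibrationMonitor:
    """Flags readings that sit far outside the recent rolling baseline."""

    def __init__(self, window=50, z_threshold=3.0, min_samples=10):
        self.history = deque(maxlen=window)
        self.z_threshold = z_threshold
        self.min_samples = min_samples

    def update(self, value):
        """Return True if value is anomalous relative to recent history."""
        anomalous = False
        if len(self.history) >= self.min_samples:
            mu = mean(self.history)
            sigma = stdev(self.history)
            if sigma > 0 and abs(value - mu) / sigma > self.z_threshold:
                anomalous = True
        if not anomalous:
            self.history.append(value)  # keep outliers out of the baseline
        return anomalous


monitor = VibrationMonitor()
for v in [1.0, 1.1, 0.9, 1.05, 0.95] * 4:  # assumed normal vibration levels
    monitor.update(v)
print(monitor.update(5.0))  # True: far outside the baseline
```

Everything here runs in constant memory on the device, so the only traffic leaving the production floor is the occasional alert.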

Smart Cities

Cities generate enormous volumes of data from traffic sensors, security cameras, environmental monitors, utility meters, and public transit systems. Processing all of this data in the cloud is impractical due to bandwidth costs and latency requirements.

Edge computing enables smart city applications that are responsive and efficient:

Traffic management: Edge processors at intersections analyze real-time traffic flow and adjust signal timing dynamically. Connected vehicles receive real-time routing information from nearby edge servers. Emergency vehicles trigger signal preemption through edge-connected traffic systems.

Public safety: Video analytics at the edge can detect unusual activity, accidents, or emergencies and alert authorities in seconds rather than minutes. The processing happens locally, avoiding the privacy concerns and bandwidth costs of streaming video to centralized servers.

Environmental monitoring: Air quality sensors, noise monitors, and weather stations feed data to edge processors that detect anomalies and trigger alerts in real-time. When pollution levels spike or flooding threatens, nearby systems can respond immediately.
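The traffic management case can be sketched with a simple proportional rule: each approach gets a guaranteed minimum green time, and the rest of the cycle is split according to how many vehicles are waiting. This is a toy model under assumed parameters. `allocate_green` is a hypothetical helper, and real controllers also handle yellow and all-red intervals, pedestrians, and coordination with neighboring intersections.

```python
def allocate_green(queue_lengths, cycle_s=90, min_green_s=10):
    """Split a signal cycle among approaches in proportion to queued vehicles."""
    n = len(queue_lengths)
    spare = cycle_s - n * min_green_s
    if spare < 0:
        raise ValueError("cycle too short for the minimum green times")
    total = sum(queue_lengths.values())
    if total == 0:
        # No demand anywhere: share the cycle evenly.
        return {approach: cycle_s / n for approach in queue_lengths}
    return {
        approach: min_green_s + spare * queued / total
        for approach, queued in queue_lengths.items()
    }


# Assumed queue counts from local sensors at a two-approach intersection.
print(allocate_green({"north": 30, "east": 10}))
# {'north': 62.5, 'east': 27.5}
```

Because the inputs come from sensors at the intersection itself, the calculation can rerun every cycle without any dependence on a remote server.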

Healthcare

Healthcare edge computing prioritizes two things: real-time responsiveness and data privacy.

Real-time patient monitoring: ICU monitoring systems analyze vital signs at the edge, detecting deterioration patterns and alerting clinicians in real-time. The processing happens on premises, ensuring that critical alerts are not dependent on external network connections.

Medical imaging: AI-powered analysis of X-rays, MRIs, and CT scans can run on edge servers within the hospital, providing preliminary readings in minutes rather than hours. The images and results stay within the hospital's network, maintaining patient privacy and regulatory compliance.

Remote surgery: Robotic surgery systems need the delay between the surgeon's inputs and the robot's movements to be low and consistent enough that the surgeon does not perceive it. Edge computing at the hospital ensures that the processing chain from surgeon to robot to feedback display is as short as possible.

Major Players in Edge Computing

Cloud Provider Edge Services

The major cloud providers have all extended their platforms to the edge:

AWS: Offers a spectrum of edge services including AWS Wavelength (AWS compute and storage embedded in telecom providers' 5G networks), AWS Outposts (AWS hardware deployed on customer premises), AWS Local Zones (small data centers in metropolitan areas), and AWS IoT Greengrass (edge computing for IoT devices).

Microsoft Azure: Provides Azure Edge Zones (edge computing at network edges), Azure Stack Edge (on-premises edge hardware), and Azure IoT Edge (containerized workloads on edge devices). Microsoft's acquisitions of edge-related companies and partnerships with telecom operators have made Azure a strong edge platform.

Google Cloud: Offers Google Distributed Cloud (edge and on-premises computing), Anthos for edge deployments, and tight integration with Android and Tensor Processing Units for on-device AI.

Edge-Native Platforms

Cloudflare Workers: Cloudflare's serverless platform runs code at over 300 edge locations globally, providing sub-50-millisecond latency for most of the world's internet users. While primarily used for web applications, Workers represents a new model of edge computing that is developer-friendly and cost-effective.

Fastly Compute: Like Cloudflare Workers, Fastly Compute runs code at CDN locations and is optimized for performance-critical web applications.

Akamai: One of the oldest CDN providers, Akamai has evolved its global network into an edge computing platform with capabilities for IoT, media, and security applications.

Hardware and Chipmakers

Nvidia: Produces the Jetson family of edge AI modules used in robots, drones, and industrial equipment, and the DRIVE platform for autonomous vehicles. Nvidia's edge hardware ranges from small, low-power modules to powerful in-vehicle computers.

Intel: Provides edge-optimized processors, FPGAs, and the OpenVINO toolkit for deploying AI models at the edge. Intel's edge portfolio targets manufacturing, retail, healthcare, and smart city applications.

Qualcomm: A leading supplier of on-device computing through its Snapdragon processors, which power a large share of Android smartphones and an increasing number of IoT and edge devices. Qualcomm's AI Engine provides on-device machine learning capabilities.

Edge Computing vs. Cloud Computing

Complementary, Not Competing

A common misconception is that edge computing will replace cloud computing. It will not. Edge and cloud are complementary layers of a distributed computing architecture, each suited to different tasks:

Edge handles: Real-time processing, latency-sensitive applications, bandwidth reduction, privacy-sensitive data, and operation during network outages.

Cloud handles: Large-scale data analysis, model training, long-term storage, cross-location aggregation, and applications where latency is not critical.

The most effective architectures use both. An autonomous vehicle processes sensor data at the edge in real-time but uploads anonymized driving data to the cloud for model training. A factory runs quality inspection at the edge but sends production statistics to the cloud for trend analysis. A hospital processes patient monitoring at the edge but uses cloud-based AI for long-term health pattern analysis.

The Hybrid Future

The computing landscape in 2026 and beyond is increasingly hybrid: a continuum from on-device processing to edge servers to regional facilities to centralized cloud data centers. Applications dynamically distribute their workloads across this continuum based on latency requirements, bandwidth availability, cost, and privacy needs.

Orchestration platforms that manage workloads across this continuum — deciding what runs where and moving computation as conditions change — are one of the most active areas of development in infrastructure technology.

Challenges and Limitations

Management Complexity

Managing thousands of distributed edge locations is fundamentally more complex than managing a few centralized data centers. Software updates, security patches, hardware failures, and capacity planning all become harder when your infrastructure is spread across thousands of sites.
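One widely used way to contain the risk of updating many sites is a staged rollout: update a few sites first, check the results, and widen the rollout only if failures stay low. The sketch below illustrates the idea under assumptions. The function names, wave sizes, and failure threshold are made up for this example, and `update` stands in for whatever mechanism actually patches a site.

```python
def rollout_waves(sites, first_wave=1, growth=4):
    """Split sites into waves of geometrically increasing size."""
    waves, start, size = [], 0, first_wave
    while start < len(sites):
        waves.append(sites[start:start + size])
        start += size
        size *= growth
    return waves


def staged_rollout(sites, update, max_failure_rate=0.1):
    """Apply update wave by wave; stop once a wave's failure rate is too high.

    update is a hypothetical callable that returns True on success.
    Returns the sites that were updated successfully.
    """
    done = []
    for wave in rollout_waves(sites):
        results = [(site, update(site)) for site in wave]
        done += [site for site, ok in results if ok]
        failures = sum(1 for _, ok in results if not ok)
        if failures / len(wave) > max_failure_rate:
            break  # halt before the problem reaches the remaining sites
    return done


sites = [f"site-{i}" for i in range(10)]
print([len(w) for w in rollout_waves(sites)])  # [1, 4, 5]
```

With a bad update, the damage is limited to the early, small waves. That is the property that makes fleets of thousands of sites manageable at all.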

This operational challenge is the primary barrier to edge computing adoption for many organizations. The technology works, but managing it at scale requires new tools, processes, and expertise.

Security

Every edge location is a potential attack surface. Unlike a centralized data center with robust physical and network security, edge devices often operate in less controlled environments — mounted on cell towers, deployed in factories, installed in retail stores. Physical security, data encryption, secure boot processes, and remote management all require careful implementation.
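As one small piece of that picture, a device should refuse any software image it cannot authenticate. The sketch below uses an HMAC with a shared key from Python's standard library, which is a simplification chosen to keep the example self-contained. Real secure boot chains generally rely on asymmetric signatures anchored in hardware, and the function names and key handling here are assumptions for illustration only.

```python
import hashlib
import hmac


def sign_image(image: bytes, key: bytes) -> str:
    """Compute an authentication tag for an update image (done by the publisher)."""
    return hmac.new(key, image, hashlib.sha256).hexdigest()


def verify_image(image: bytes, tag: str, key: bytes) -> bool:
    """Check the tag on the device before installing anything."""
    expected = sign_image(image, key)
    return hmac.compare_digest(expected, tag)  # constant-time comparison


key = b"example-key-provisioned-at-the-factory"  # placeholder, not a real secret
image = b"firmware v2 contents"
tag = sign_image(image, key)

print(verify_image(image, tag, key))                # True
print(verify_image(image + b"tampered", tag, key))  # False
```

The constant-time comparison matters on devices an attacker can physically reach, because a naive string comparison can leak how many leading characters of a forged tag were correct.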

Standardization

The edge computing ecosystem is fragmented. Different cloud providers, telecom operators, and hardware manufacturers use different APIs, management tools, and deployment models. Applications built for one edge platform are often difficult to port to another.

Industry organizations like the Linux Foundation's LF Edge and the Open Networking Foundation are working on standardization, but the edge computing landscape in 2026 is still less standardized than the cloud computing market.

Cost

Edge computing infrastructure is typically more expensive per unit of computation than centralized cloud resources, because small distributed sites cannot match the economies of scale of a large data center. The economic case for edge depends on the value of low latency, bandwidth savings, and data privacy for specific applications. For many workloads, the cloud remains more cost-effective.

The Future of Distributed Computing

Edge AI and Machine Learning

The convergence of edge computing and artificial intelligence is one of the most significant trends in technology. Running AI models at the edge — on phones, in vehicles, on factory equipment — enables intelligent, real-time decision-making without cloud dependency.

Hardware advances are making edge AI increasingly powerful. Specialized AI accelerators from Nvidia, Qualcomm, Apple, and Google now deliver impressive machine learning performance in low-power packages. Model optimization techniques like quantization, pruning, and knowledge distillation make it possible to run sophisticated AI models on edge devices with limited resources.
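Quantization is the easiest of these techniques to show in a few lines. The sketch below applies symmetric 8-bit quantization to a short list of weights in plain Python. It is a minimal illustration of the idea, not how any particular framework implements it, and the function names and sample weights are made up for this example.

```python
def quantize_int8(weights):
    """Map floats to integers in [-127, 127] using one shared scale factor."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    quantized = [max(-127, min(127, round(w / scale))) for w in weights]
    return quantized, scale


def dequantize(quantized, scale):
    """Recover approximate float values from the stored integers."""
    return [q * scale for q in quantized]


weights = [0.4, -1.0, 0.25, 0.0]  # assumed example weights
q, scale = quantize_int8(weights)
restored = dequantize(q, scale)

print(q)  # small integers, each storable in one byte
print(max(abs(w - r) for w, r in zip(weights, restored)))  # worst-case rounding error
```

Each weight now fits in one byte instead of four, at the cost of a rounding error no larger than half the scale factor. Whether a model tolerates that error depends on the model, which is why quantized models are normally re-evaluated before deployment.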

Autonomous Systems

Edge computing is an enabling technology for the broader trend toward autonomous systems — vehicles, drones, robots, and industrial equipment that operate independently. These systems require real-time intelligence at the edge because they must respond to their environment faster than any remote system can support.

The Programmable Physical World

The ultimate vision of edge computing is a programmable physical world — an environment where computation is embedded everywhere, processing data from billions of sensors, and coordinating responses in real-time. Traffic flows are optimized across entire cities. Energy grids balance supply and demand automatically. Agricultural systems adjust irrigation, fertilization, and pest control based on real-time soil and weather data.

This vision requires edge computing at massive scale, combined with advances in IoT, 5G, AI, and energy efficiency. The individual technologies are ready. The challenge now is integration, standardization, and deployment.

Conclusion

Edge computing represents a fundamental shift in how we think about computing infrastructure. The cloud computing revolution centralized computation for good reasons — efficiency, manageability, economies of scale. Edge computing does not reverse that revolution but extends it, recognizing that some problems are best solved where data is generated and where actions need to happen.

For developers, edge computing opens new categories of applications that were previously impossible — real-time, distributed, and responsive to the physical world. For businesses, it offers lower latency, reduced bandwidth costs, improved reliability, and better data privacy. For society, it enables the autonomous, intelligent, responsive infrastructure that underpins smart cities, autonomous vehicles, and advanced healthcare.

The edge is not a replacement for the cloud. It is the next layer of the computing stack, and understanding it is essential for anyone building technology in the years ahead.